Most industrial organizations begin with the primary objective of preventing serious harm and ensuring the safety of their workforce.

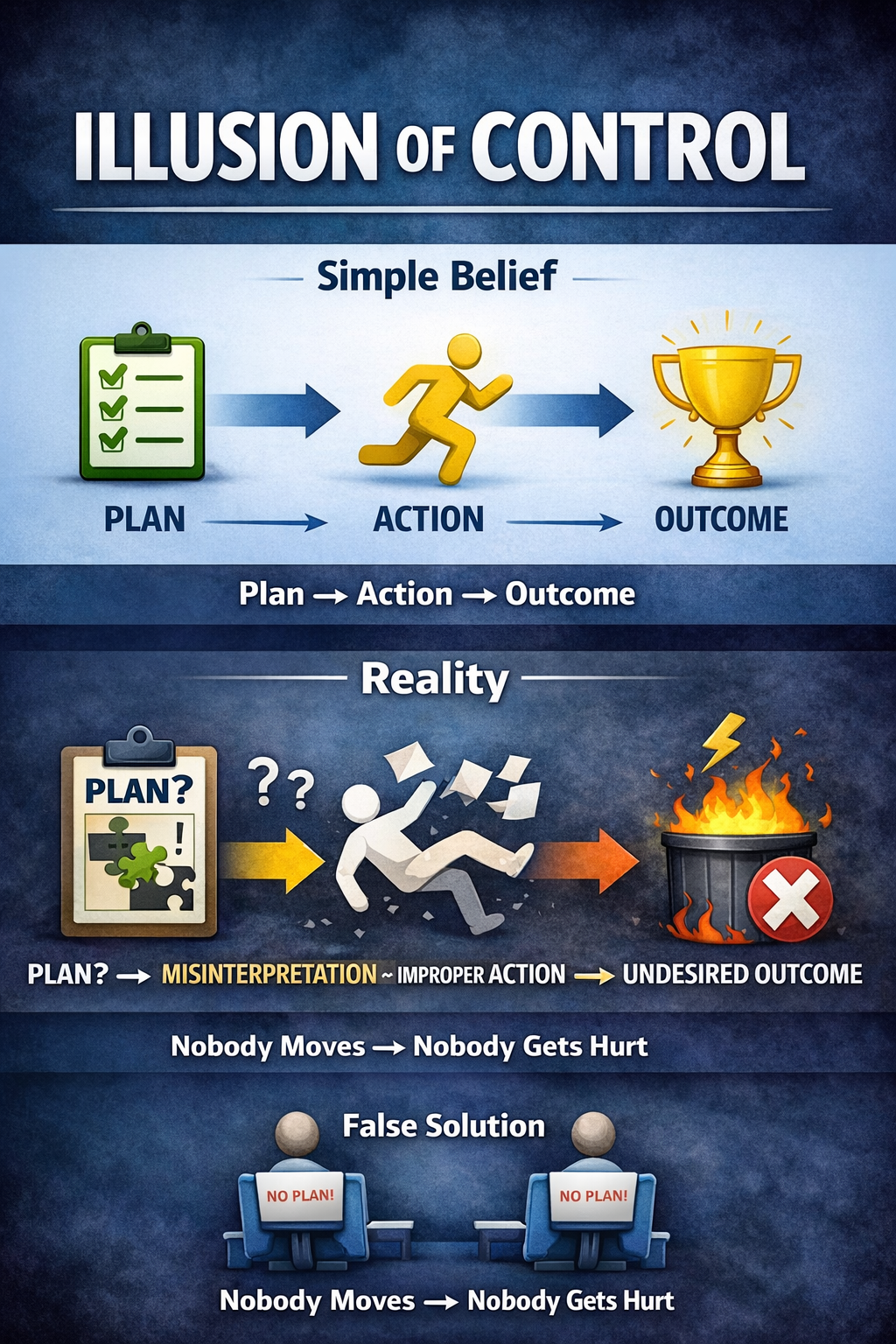

Over time, this objective often evolves into a prevailing belief:

“If we control movement tightly enough, nothing bad will happen.”

Nobody moves, nobody gets hurt.

This approach appears responsible and conveys decisiveness.

However, this perception can be misleading.

Safety dashboards reinforce this notion by equating fewer incidents with success. When adverse events occur, organizations typically investigate the failure, identify the root cause, retrain personnel, and implement stricter procedures. The underlying logic assumes that deviation is the primary cause of incidents, and therefore, increased compliance is the solution.

This is the illusion of control.

Administrative controls do not equate to effective field control. Procedures typically describe work as it is imagined, whereas actual operations occur under dynamic conditions such as variable weather, access limitations, sequencing challenges, equipment performance, time constraints, and coordination among multiple trades. No standard operating procedure can anticipate every possible interaction among personnel, energy sources, and contextual factors.

Misinterpretation, Not Misbehavior

Behavioral science has emphasized the distinction between misinterpretation and misbehavior for decades.

- Daniel Kahneman showed that humans don’t fail because they’re careless; they fail because they rely on mental shortcuts when information is incomplete or ambiguous.

- Dan Ariely demonstrated that context and framing drive decisions more than rules.

- Charles Duhigg showed that cues and routines—not policies—govern behavior under pressure.

In industrial settings, undesired outcomes seldom result from intentional violations of established rules.

Instead, such outcomes often arise from misinterpretation of information, including:

- Signals that are unclear

- Conditions that change faster than procedures

- Conflicting goals (production, quality, schedule)

- Gaps between training scenarios and real situations

Successful outcomes are typically attributable to worker skill rather than chance.

Lessons from Abraham Wald for Safety Management

During WWII, analysts studied returning aircraft to decide where to add armor. The damage patterns were obvious—wings and fuselage. The instinct was to reinforce those areas.

Abraham Wald saw the flaw.

The data only showed where planes could be hit and still return.

The missing data—the planes that never came back—revealed where reinforcement actually mattered.
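Wald's reasoning can be made concrete with a small sketch. The sortie counts below are invented purely for illustration (they are not historical data), and the names `SORTIES`, `hit_counts`, and `loss_rate` are hypothetical. The sketch compares what the analysts could observe (hits on aircraft that returned) with the loss rate per section once the missing aircraft are included.

```python
from collections import Counter

# Hypothetical, illustrative sorties: (section hit, returned to base?).
# These numbers are invented for the sketch and are not historical data.
SORTIES = (
    [("wings", True)] * 40 + [("wings", False)] * 5
    + [("fuselage", True)] * 35 + [("fuselage", False)] * 5
    + [("engine", True)] * 5 + [("engine", False)] * 30
)


def hit_counts(sorties, returned_only):
    """Count hits per section, optionally only for aircraft that returned."""
    return Counter(
        section for section, returned in sorties
        if returned or not returned_only
    )


def loss_rate(sorties, section):
    """Fraction of aircraft hit in `section` that did not return."""
    outcomes = [returned for s, returned in sorties if s == section]
    return outcomes.count(False) / len(outcomes)


# What the analysts could see: damage on the aircraft that came back.
observed = hit_counts(SORTIES, returned_only=True)
# The full picture, including the aircraft that never returned.
actual = hit_counts(SORTIES, returned_only=False)

print("Hits seen on returning aircraft:", observed.most_common())
print("Hits across all aircraft:", actual.most_common())
for section in actual:
    print(section, "loss rate:", round(loss_rate(SORTIES, section), 2))
```

With these invented numbers, the returning aircraft show the most damage on the wings and almost none on the engine, yet the engine has by far the highest loss rate. The visible sample points to the wrong place because the selection step (returning to base) filtered out the cases that mattered most.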

Safety management often replicates this analytical error.

We study incidents and near misses endlessly.

Far less attention goes to critical tasks that are completed successfully under challenging conditions.

What We Should Be Studying Instead

Most high-risk work succeeds every day:

- High-voltage switching

- Confined space entries

- Live troubleshooting

- Lifts, isolations, energizations, commissioning

Such success is attributable to workers who:

- Detect weak signals early

- Re-interpret information when conditions change

- Adjust sequencing instinctively

- Know when to stop, escalate, or redesign the task

These capabilities often remain unrecognized within systems that focus exclusively on incident analysis.

A Practical Example from Industry

Scenario: High-voltage troubleshooting at a substation during commissioning.

The SOP assumes:

- Accurate drawings

- Correct labeling

- Stable configuration

- Clear isolation boundaries

In reality:

- As-built drawings lag

- Temporary jumpers are installed

- Multiple crews are working nearby

- Time pressure is real

What prevented an incident was not adherence to the standard operating procedure.

It was a technician noticing:

- A breaker position that didn’t match the expected status

- An unusual relay indication

- A mismatch between the physical layout and the drawings

The technician paused, revalidated the energy state, requested a second verification, and delayed the task.

Nothing happened.

No incident.

No report.

However, such moments provide invaluable insights for safety improvement.

Designing Systems for Capability Rather Than Solely for Compliance

Focusing exclusively on failures reinforces controls that address only rare events.

By studying successful outcomes, organizations can design systems that function effectively under actual operating conditions.

This means:

- PJHAs should capture how skilled workers detect and resolve ambiguity, not just hazards.

- SOPs should document decision points, not just steps.

- Skill development should focus on pattern recognition, signal detection, and judgment—not memorization.

- Training scenarios should include incomplete information, conflicting cues, and changing conditions.

Human judgment should not be viewed as a problem to be eliminated.

Rather, it serves as the control mechanism that prevents harm when procedural rules are insufficient.

The Critical Shift in Safety Perspective

Safety I asked: Why did this fail?

Safety II asks: How does this usually succeed?

The objective is not to achieve fewer incidents by chance.

The aim is to achieve safer outcomes through intentional system design.

“Nobody moves, nobody gets hurt” is not a rule to enforce.

It’s the result of systems that:

- Respect energy

- Acknowledge context

- Support real-time decision-making

- Build competence, not just compliance

This approach reflects the true nature of effective control in safety management.